I haven't posted in a very long time, so I wanted to give you an update on what is going on with my project FPL and with me.

The short version: in February 2026 I fully switched from Windows 11 to CachyOS, an Arch-based Linux distribution, and I am working very hard to finish "final_platform_layer", which will be released this year and will contain all the backends I want.

The long version is much more complicated, so here it is:

For the last couple of years I worked on several projects, including Final Platform Layer version 0.9.9, which was released in 2025.

That release contained a lot of bugfixes and finally added support for multi-channel audio playback, which was very important to me.

After that, I basically lost all motivation to work on any private development for many months, due to my daily programming job and other reasons.

Somehow I got over that. I wanted to code again, but in a more fun way, so I started writing several emulator prototypes. The result was:

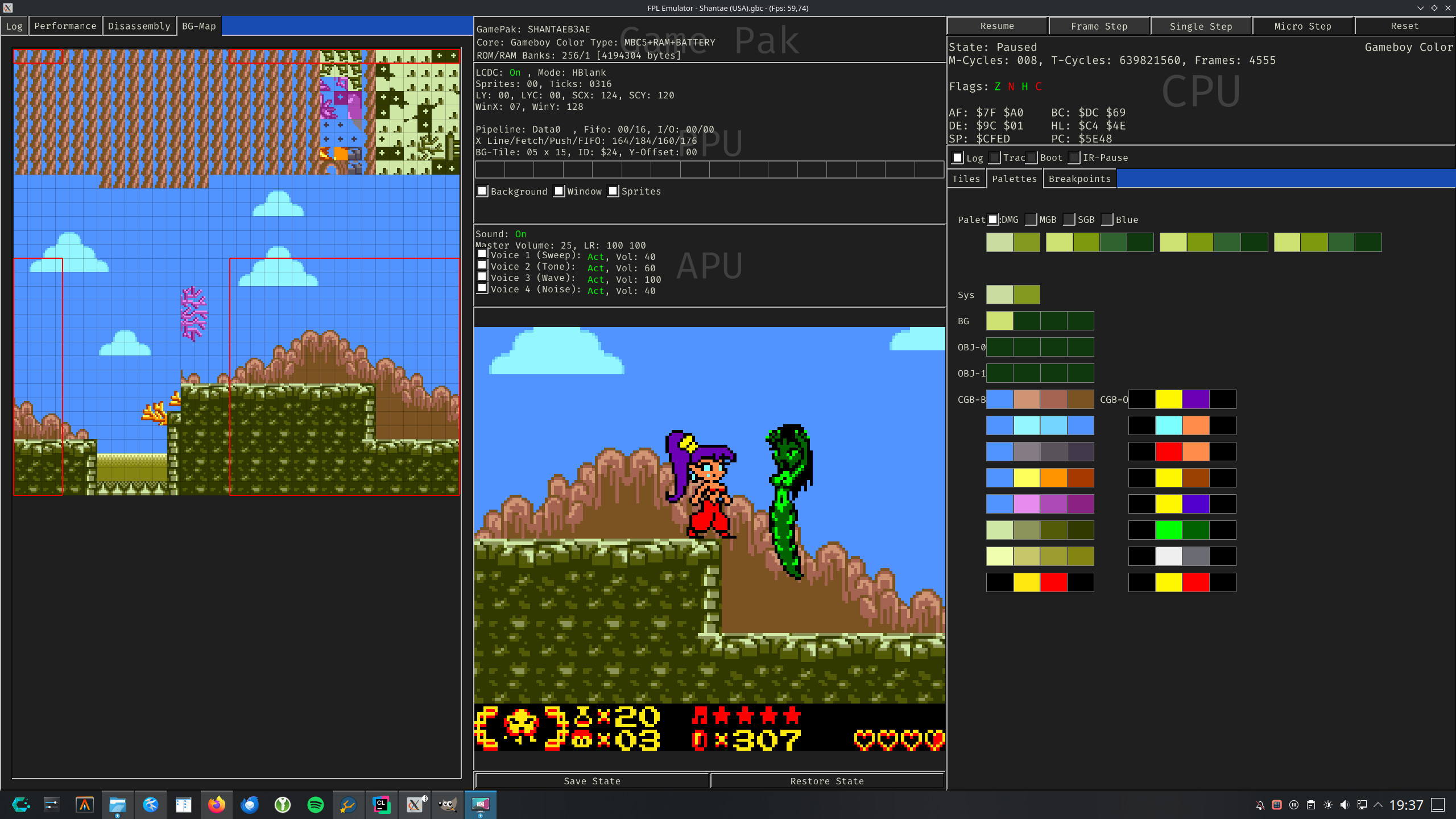

A CHIP-8 emulator and a crappy Game Boy emulator that barely worked. The CHIP-8 emulator was easy to write, but very limited due to the nature of the CHIP-8 platform.

But I was not satisfied with my Game Boy emulator, so I watched "The Ultimate Game Boy Talk" from 33C3, read several articles, and started over.

After 3 or 4 months I finally had a working Game Boy emulator that can play a lot of DMG games, including sound, and I was really happy about it.

That emulator was already using FPL as its backbone, so I decided to use it as a demo project for FPL as well.

Since then, it has been included in the "demos/" folder, and the emulator itself is its own single-header-file library in the root of the final_game_tech repository.

Seeing a working emulator based on my libraries motivated me a lot, so I started programming more again and got back to FPL: fixing bugs, adding more backends, cleaning it up, etc.

At the same time I was switching from Windows to Linux, because I was sick of Windows once and for all. Fortunately I am very familiar with Linux, so switching was not that hard.

In addition, I started using AI to troubleshoot Linux issues and to help me understand things better.

Of course I am also using AI as a tool to improve my programming skills, learn new techniques, help me find bugs, and even implement new features.

For that I use local LLMs and premium AI services that actually cost me money. Fixing bugs is the most valuable use for me, because it saves me a ton of time and headaches.

Now we are in the second quarter of 2026 and FPL has received a ton of updates:

- PulseAudio backend

- PipeWire backend

- Reworked Input System

- Much better Linux joypad input support

- DirectInput support

- Tons of fixes and updates related to Linux and POSIX

The source file is now huge at 1.2 MB and 33k lines of code, but it still compiles very fast due to its single-header-file nature.

My Game Boy emulator, called "Final Game Box", is now complete and even supports Game Boy Color.

It is by far not comparable to well-known emulators such as SameBoy, mGBA, etc., but it can run and play tons of games, including my favourite game "Shantae" ;-)

So the plan is to finish FPL this year by releasing version 1.0, which will contain everything that users and I wanted for this library while staying bare-bones, as FPL was meant to be.

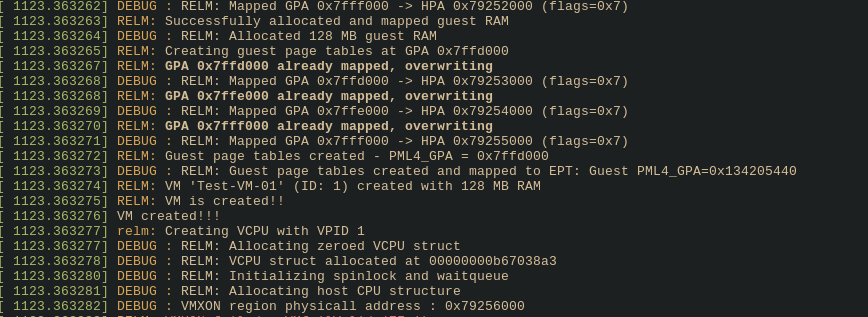

Of course, Windows will still be supported. For that reason I have a fully virtualized Windows setup with GPU passthrough, so I can work on FPL inside Windows as well.

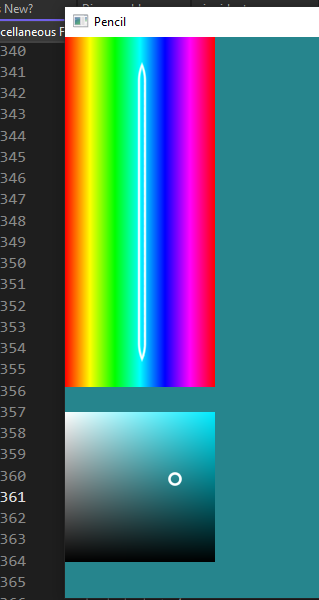

But my main focus is now Linux and Unix, using CLion as my primary IDE.

Stay tuned for updates.